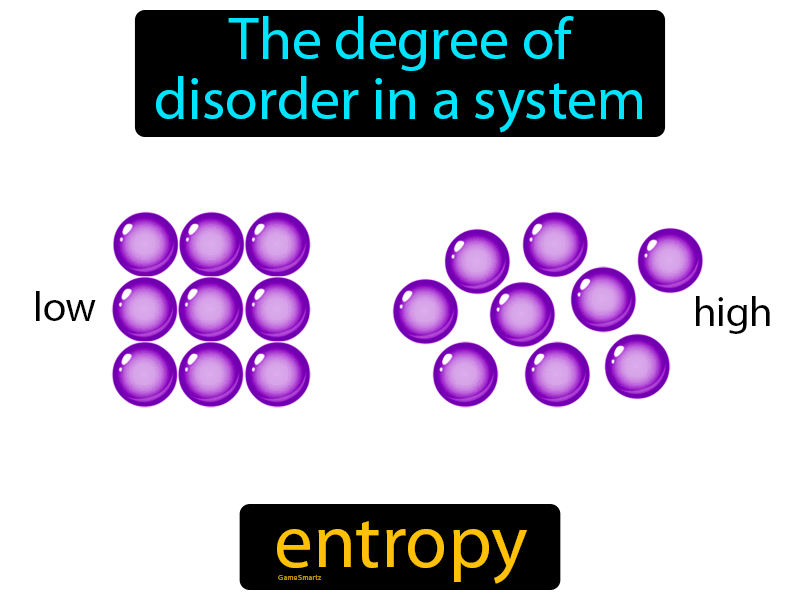

Thermodynamics deals with the relation between that small part of the universe in which we are interested (the system) and the rest of the universe (the surroundings). Thermodynamic properties depend on the current state of the system but not on its previous history. They are either extensive, meaning their values depend on the amount of substance comprising the system (e.g. volume), or intensive, meaning their values are independent of the amount of substance making up the system (e.g. temperature and pressure).

Entropy is a thermodynamic property, like temperature, pressure and volume, but, unlike them, it cannot easily be visualised. The concept of entropy emerged from the mid-19th-century discussion of the efficiency of heat engines. Generations of students struggled with Carnot's cycle and various types of expansion of ideal and real gases, and never really understood why they were doing so.

Many earlier textbooks took the approach of defining a change in entropy, $\Delta S$, via the equation:

$$\Delta S = \frac{Q_{\text{rev}}}{T} \qquad \text{(i)}$$

where $Q_{\text{rev}}$ is the quantity of heat transferred reversibly and $T$ the thermodynamic temperature. However, it is more common today to find entropy explained in terms of the degree of disorder in the system and to define the entropy change, $\Delta S$, as:

$$\Delta S = \frac{Q}{T} \qquad \text{(ii)}$$

This more modern approach has two disadvantages. First, the units of entropy are joules per kelvin, but the degree of disorder has no units. Secondly, equation (ii) does not recognise that the system has to be at equilibrium (the heat must be transferred reversibly) for it to be valid.

We prefer to consider that the entropy of a system corresponds to the distribution of its molecular energy among the available energy levels, and that systems tend to adopt the broadest possible distribution. Alongside this, it is important to bear in mind the three laws of thermodynamics. The first law deals with the conservation of energy, the second law is concerned with the direction in which spontaneous reactions go, and the third law establishes that at absolute zero all pure, perfectly crystalline substances have the same entropy, which is taken, by convention, to be zero.

In classical terms, systems at absolute zero have no energy, and the atoms or molecules would be close-packed together. As energy in any form is supplied to a system, its molecules begin to rotate, vibrate and translate, which is observable as a rise in temperature. The rise in temperature caused by a given quantity of heat will be different for different substances and depends on the heat capacity of the substance.
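The classical definition of entropy change for heat transferred reversibly at constant temperature, $\Delta S = Q_{\text{rev}}/T$, and the role of heat capacity can be illustrated numerically. The following is a minimal sketch, not part of the original text, and it rests on stated assumptions: every process is reversible, heat capacity is constant over the temperature range (so integrating $dS = C\,dT/T$ gives $C \ln(T_2/T_1)$), and the gas is ideal. It uses approximate literature values (enthalpy of fusion of ice about 6.01 kJ/mol, molar heat capacity of liquid water about 75.3 J/(K mol)) and a hypothetical second substance with a heat capacity of 25.0 J/K. The function names are illustrative only.

```python
import math

R = 8.314462618  # molar gas constant, J/(K mol)


def delta_s_isothermal(q_rev: float, temperature: float) -> float:
    """Entropy change (J/K) for heat q_rev (J) transferred reversibly at constant T (K)."""
    if temperature <= 0:
        raise ValueError("thermodynamic temperature must be positive")
    return q_rev / temperature


def temperature_rise(q: float, heat_capacity: float) -> float:
    """Temperature rise (K) when heat q (J) is absorbed by a body of constant heat capacity (J/K)."""
    return q / heat_capacity


def delta_s_heating(heat_capacity: float, t1: float, t2: float) -> float:
    """Entropy change (J/K) for reversible heating from t1 to t2 (K) at constant heat capacity.

    Integrating dS = C dT / T from t1 to t2 gives C ln(t2 / t1).
    """
    return heat_capacity * math.log(t2 / t1)


def delta_s_gas_expansion(n: float, v1: float, v2: float) -> float:
    """Entropy change (J/K) for reversible isothermal expansion of n mol of ideal gas.

    Here q_rev = nRT ln(v2 / v1), so q_rev / T = nR ln(v2 / v1).
    """
    return n * R * math.log(v2 / v1)


if __name__ == "__main__":
    # Melting 1 mol of ice at 273.15 K (assumed enthalpy of fusion ~6.01 kJ/mol).
    print(f"melting ice:   {delta_s_isothermal(6010.0, 273.15):.1f} J/K")
    # Heating 1 mol of liquid water (assumed C ~75.3 J/K) from 273.15 K to 373.15 K.
    print(f"heating water: {delta_s_heating(75.3, 273.15, 373.15):.1f} J/K")
    # The same 1000 J gives different rises for different heat capacities
    # (25.0 J/K is a hypothetical second substance).
    print(f"temperature rise: {temperature_rise(1000.0, 75.3):.1f} K vs "
          f"{temperature_rise(1000.0, 25.0):.1f} K")
    # Doubling the volume of 1 mol of ideal gas at constant temperature.
    print(f"gas expansion: {delta_s_gas_expansion(1.0, 1.0, 2.0):.2f} J/K")
```

The same quantity of heat produces a larger temperature rise in the substance with the smaller heat capacity, which is the point made in the text.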

Statistical Entropy: Entropy is a state function that is often erroneously referred to as the 'state of disorder' of a system. Qualitatively, entropy is simply a measure of how much the energy of atoms and molecules becomes more spread out in a process, and it can be defined either in terms of the statistical probabilities of a system or in terms of other thermodynamic quantities. Entropy is dynamic, something which static scenes do not reflect.

Statistical Entropy - Mass, Energy, and Freedom: The energy or the mass of a part of the universe may increase or decrease, but only if there is a corresponding decrease or increase somewhere else in the universe. The freedom in that part of the universe, by contrast, may increase with no change in the freedom of the rest of the universe. There might be decreases in freedom in the rest of the universe, but the sum of the increase and decrease must result in a net increase.

Simple Entropy Changes - Examples: Several examples are given to demonstrate how the statistical definition of entropy and the second law can be applied to phase changes, gas expansions, dilution, colligative properties and osmosis.

Microstates: Dictionaries define “macro” as large and “micro” as very small, but a macrostate and a microstate in thermodynamics are not just definitions of big and little sizes of chemical systems. Instead, they are two very different ways of looking at a system. A microstate is one of the huge number of different accessible arrangements of the molecules' motional energy for a particular macrostate.

“Disorder” was the consequence, to Boltzmann, of an initial “order”, not, as is obvious today, of what can only be called a “prior, lesser but still humanly-unimaginable, large number of accessible microstates”. It was his surprisingly simplistic conclusion: if the final state is random, the initial system must have been the opposite, i.e., ordered.
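The statistical view, in which entropy measures how widely energy is spread over accessible microstates and the total must show a net increase, can be made concrete with Boltzmann's relation $S = k_B \ln W$, where $W$ is the number of microstates. The sketch below is an illustration under stated assumptions, not the author's method: it swaps in the Einstein-solid toy model, in which $q$ identical energy quanta are shared among $N$ oscillators so that $W = \binom{q+N-1}{q}$. The subsystem sizes (50 oscillators each), the total energy (100 quanta) and all function names are hypothetical.

```python
from math import comb, log

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact SI value)


def multiplicity(n_oscillators: int, quanta: int) -> int:
    """Number of microstates W for `quanta` identical energy quanta shared among
    `n_oscillators` distinguishable oscillators (Einstein-solid toy model)."""
    return comb(quanta + n_oscillators - 1, quanta)


def boltzmann_entropy(w: int) -> float:
    """Statistical entropy S = k_B ln W, in J/K."""
    return K_B * log(w)


def most_probable_split(n_a: int, n_b: int, total_quanta: int) -> tuple[int, int]:
    """For two subsystems sharing a fixed total energy, return (q_a, W_total) for the
    split q_a + q_b = total_quanta that has the most combined microstates."""
    best = max(
        range(total_quanta + 1),
        key=lambda q_a: multiplicity(n_a, q_a) * multiplicity(n_b, total_quanta - q_a),
    )
    return best, multiplicity(n_a, best) * multiplicity(n_b, total_quanta - best)


if __name__ == "__main__":
    n_a = n_b = 50  # hypothetical subsystem sizes (oscillators)
    total = 100     # hypothetical total energy in quanta; conserved throughout

    # Initial macrostate: all of the energy sits in subsystem A.
    w_a0, w_b0 = multiplicity(n_a, total), multiplicity(n_b, 0)

    # Final macrostate: the energy split with the most microstates.
    q_a, w_best = most_probable_split(n_a, n_b, total)
    w_a1, w_b1 = multiplicity(n_a, q_a), multiplicity(n_b, total - q_a)

    print("most probable split:", q_a, total - q_a)
    print("dS_A   =", boltzmann_entropy(w_a1) - boltzmann_entropy(w_a0), "J/K")
    print("dS_B   =", boltzmann_entropy(w_b1) - boltzmann_entropy(w_b0), "J/K")
    print("dS_tot =", boltzmann_entropy(w_best) - boltzmann_entropy(w_a0 * w_b0), "J/K")
```

Running it, the most probable split is even (50 and 50 quanta). Subsystem A's entropy falls as it gives up energy, subsystem B's rises from zero, and the total change is positive. Energy is conserved while the number of accessible microstates, and hence the entropy, increases overall.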
